Jean-François Daoust, Richard Nadeau, Ruth Dassonneville, Erick Lachapelle, Éric Bélanger, Justin Savoie, and Clifton van der Linden

Mass compliance with recently enacted public health measures such as social distancing or lockdowns can have a decisive impact on coronavirus transmission and, by extension, on the number of COVID-19-related hospitalizations and deaths. It is thus essential to understand which groups comply with the mitigation measures set forth by governments and public health officials. To gain such an understanding, we need reliable, high-quality measures of compliance.

The data available to study compliance with COVID-19 public health measures come largely from citizens’ self-reported behaviors. It is very likely that social desirability bias affects respondents’ answers in surveys, leading them to overstate their compliance and lowering the quality of the data. To address this challenge, we tested different ways to measure compliance in surveys. More specifically, we tested three different versions of ‘face-saving’ strategies that were randomly allocated to Canadian respondents. Below, we focus on our third strategy, which was administered to a nationally representative sample of Canadians between 15 and 21 April 2020 (n=1,006).

This approach, which we find to be the most effective technique for reducing social desirability bias, uses a question with two crucial features: a preamble that rationalizes non-compliant behavior and a guilt-free answer choice. The estimates for this treatment, i.e. the face-saving question, are compared with those for the control group, which was asked about its behavior through a direct question.
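As a rough illustration of how respondents could be split between a direct question and a face-saving question, here is a minimal sketch of random allocation. The arm labels, the fixed seed, and the choice of a balanced split are assumptions made for the example; the post does not describe the allocation procedure in this detail.

```python
import random

# Hypothetical arm labels; the post only distinguishes a control group
# (direct question) from a treatment group (face-saving question).
ARMS = ("control", "face_saving")


def assign_arms(respondent_ids, seed=2020):
    """Randomly allocate respondents to arms in (near-)equal numbers.

    Shuffles the respondent IDs with a seeded generator so the allocation
    is reproducible, then deals them out to the arms in turn.
    """
    rng = random.Random(seed)
    ids = list(respondent_ids)
    rng.shuffle(ids)
    return {rid: ARMS[i % len(ARMS)] for i, rid in enumerate(ids)}


# Example with 1,006 placeholder IDs (matching the reported sample size).
allocation = assign_arms(range(1006))
```

Because allocation is random, differences in reported behavior between the two arms can be attributed to the question wording rather than to differences between the respondents.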

The direct question was simply: “Have you done any of the following activities in the last week?” The answer choices were “Yes/No/I don’t know” and referred to several activities, including visiting someone else’s home, having someone over who does not live with the respondent, and getting together outdoors with people who do not live with the respondent. The questionnaire included eight items, but here we focus on these three because they were clearly proscribed at the time of the survey.

The face-saving (guilt-free) question was:

“Some people have altered their behavior since the beginning of the pandemic, while others have continued to pursue various activities. Some may also want to change their behavior but cannot do so for different reasons.

Have you done any of the following activities in the last week?”

Moreover, the answer choices included “Yes,” “Only when necessary/occasionally,” and “No.”
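To compare the two question formats, the answers have to be reduced to a single indicator of admitted non-compliance. The sketch below shows one way this could be coded. It assumes that both “Yes” and “Only when necessary/occasionally” count as admitting non-compliance in the face-saving arm, and that “I don’t know” in the control arm counts as not admitting it. These coding rules and all names in the code are illustrative assumptions, not a description of the authors' actual procedure.

```python
# Assumed coding scheme: which answers count as admitted non-compliance.
NON_COMPLIANT_ANSWERS = {
    "control": {"Yes"},
    "face_saving": {"Yes", "Only when necessary/occasionally"},
}


def is_non_compliant(arm, answer):
    """Return True if the answer counts as admitted non-compliance in this arm."""
    return answer in NON_COMPLIANT_ANSWERS[arm]


def share_non_compliant(arm, answers):
    """Share of respondents in an arm who admit non-compliance on one item.

    Answers outside the arm's non-compliant set (including "I don't know")
    are treated as not admitting non-compliance.
    """
    if not answers:
        raise ValueError("answers must not be empty")
    return sum(is_non_compliant(arm, a) for a in answers) / len(answers)
```

For example, `share_non_compliant("control", ["Yes", "No", "No", "I don't know"])` returns `0.25`.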

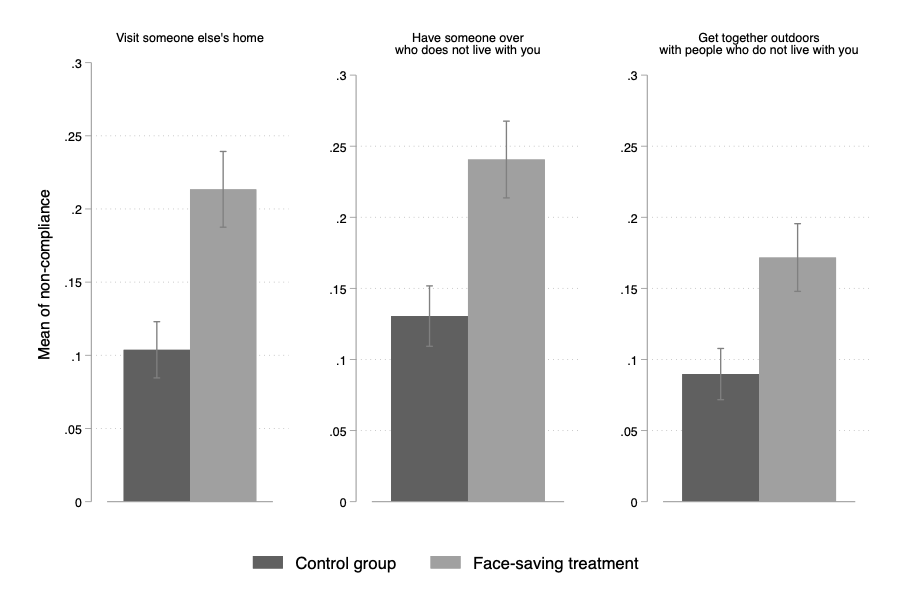

Figure 1 compares the results of this survey strategy with those of the control group. The face-saving strategy appears effective. On average, the effects are +11 percentage points (visiting someone else’s home), +11 percentage points (having someone over) and +8 percentage points (getting together outdoors with people who do not live with you). For each of these survey items, the share of respondents who admit that they do not (always) comply with public health measures thus increases substantially. These effects are important in and of themselves, but they matter even more when set against the baseline levels of admitted non-compliance in the control group. In fact, the face-saving treatment doubles our ability to identify non-compliers.

Figure 1: Levels of non-compliance across the control and treatment groups

Note: Means are shown with 84% confidence intervals
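The 84% level is a common choice for this kind of plot: when two groups have similar standard errors, non-overlapping 84% intervals correspond roughly to a difference that is significant at the 5% level. The sketch below shows how such intervals and a percentage-point difference could be computed with a normal approximation. The counts are hypothetical and chosen only for illustration; they are not the study's data, and the function names are our own.

```python
from math import sqrt
from statistics import NormalDist


def proportion_ci(successes, n, level=0.84):
    """Proportion with a normal-approximation (Wald) confidence interval."""
    p = successes / n
    z = NormalDist().inv_cdf(0.5 + level / 2)  # two-sided critical value
    half_width = z * sqrt(p * (1 - p) / n)
    return p, max(0.0, p - half_width), min(1.0, p + half_width)


def difference_in_points(p_treatment, p_control):
    """Treatment effect expressed in percentage points."""
    return 100 * (p_treatment - p_control)


# Hypothetical counts (NOT the study's data): respondents admitting
# non-compliance out of 500 per arm.
control = proportion_ci(50, 500)
treated = proportion_ci(105, 500)
effect = difference_in_points(treated[0], control[0])

# True when the two 84% intervals do not overlap.
intervals_separate = control[2] < treated[1]
```

With these made-up counts the two intervals do not overlap, which is the visual pattern a reader would look for in a figure of this kind.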

There has rarely been such an urgent need for methodological innovation to improve how we understand and measure human behavior. If we want to be confident in our analyses of a variable such as compliance with social distancing or lockdowns, it is important to minimize the social desirability bias that is typically associated with measures of compliance with public health guidelines. We believe that survey research can do better than its current practice, which is why we developed face-saving strategies.

We argue that researchers and policymakers around the world should adopt the face-saving question. The cost of adding a very short preamble and a guilt-free answer choice is almost nil and the benefits are clear: researchers will enhance the validity of their measures, improve their descriptive inferences and be able to identify more non-compliers. In turn, getting closer to the true distribution of non-compliance will strengthen causal inferences and produce better analyses when the outcome being predicted is compliance versus non-compliance.